Test Runs

Overview

Test Runs allow you to systematically evaluate your prompts against entire datasets to measure performance, quality, and consistency. Run automated tests to compare different prompt versions, models, and configurations before deploying to production.

Creating a Test Run

- Click + New Test

- Select an LLM Provider and Model

- Select your Prompt

- Select your Dataset

- Click Add Evaluator to choose how outputs are scored

- For each evaluator, choose an output column if the evaluator requires one

- (Optional) Toggle Quick Run to test only 20 items instead of the full dataset

- (Optional) Click Advanced Settings to adjust temperature and max tokens

- Click Run Test
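The options collected by the steps above can be pictured as a single settings object. This is a minimal sketch under stated assumptions: the class `RunSettings` and all of its field names are hypothetical and do not describe an actual API, and it assumes Quick Run takes the first 20 dataset items (the product may sample them differently).

```python
from dataclasses import dataclass, field
from typing import Optional

# Quick Run tests 20 items, per the steps above.
QUICK_RUN_SIZE = 20


@dataclass
class RunSettings:
    # Hypothetical field names mirroring the New Test form; not a real API.
    provider: str
    model: str
    prompt: str
    dataset: str
    # Maps evaluator name -> output column (None if the evaluator needs no column).
    evaluators: dict[str, Optional[str]] = field(default_factory=dict)
    quick_run: bool = False
    # Advanced Settings; None means "keep the default".
    temperature: Optional[float] = None
    max_tokens: Optional[int] = None

    def items_to_test(self, dataset_items: list) -> list:
        """Return the items this run covers.

        Assumption: Quick Run uses the first 20 items of the dataset.
        """
        if self.quick_run:
            return dataset_items[:QUICK_RUN_SIZE]
        return list(dataset_items)
```

With `quick_run=True`, a 50-item dataset yields 20 test items; with it off, all 50 are tested.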

Monitoring Progress

- A new test run starts in the Preparing status

- The status then changes to Running, and progress updates live

- Results appear in real time as items finish

- Click a running test to view individual results as they complete

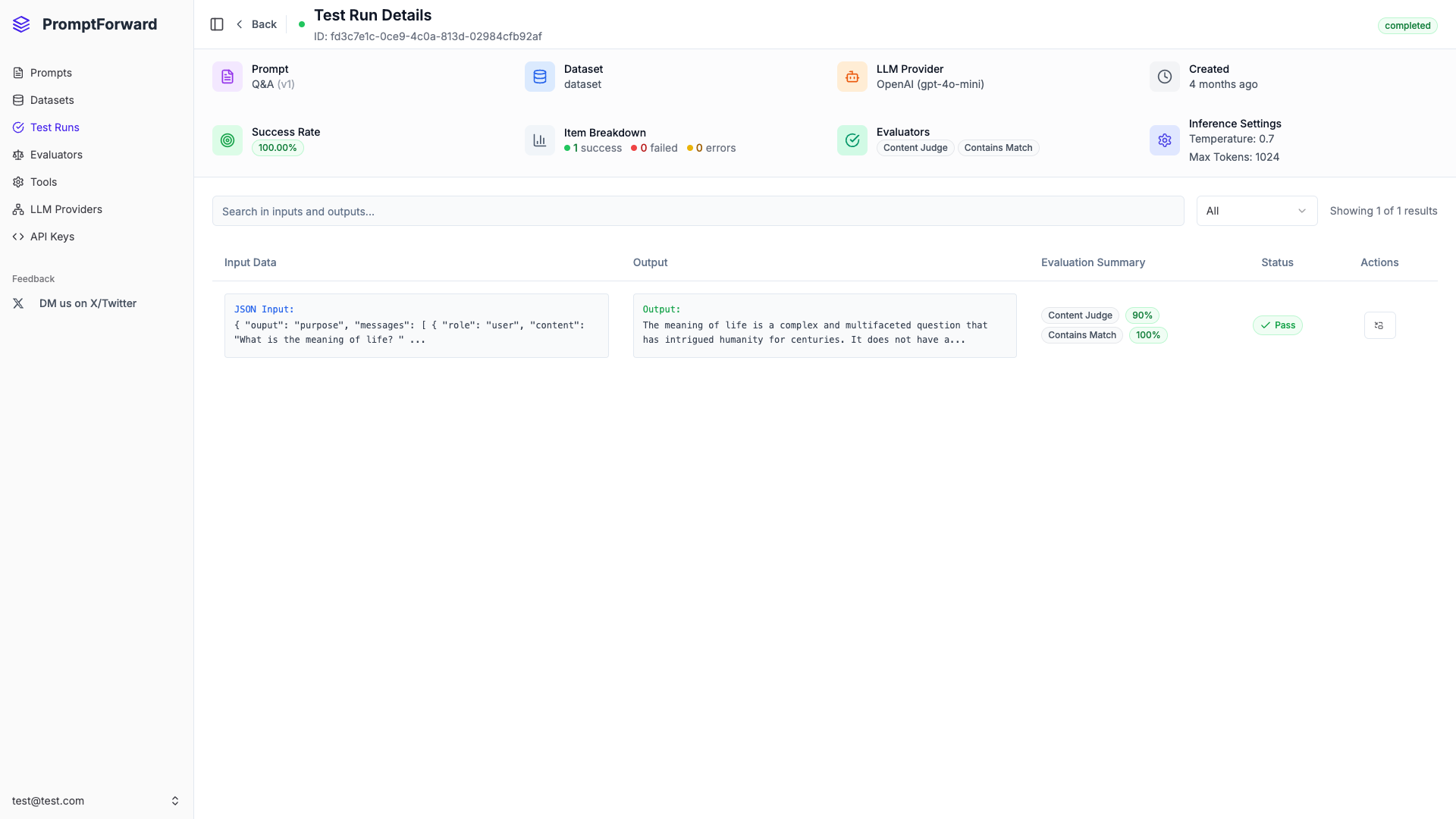

Viewing Results

- Click a completed test run

- Review the success rate and metrics

- Scroll through test items to see individual results

- Click any item to view full details, evaluation scores, and reasoning

- Use Previous and Next to navigate items
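One way to read the success rate is as the share of finished items that completed successfully. The sketch below uses the item statuses listed under Filtering Results; the exact formula the product uses is not documented here, so treat the choice to exclude Pending and Running items from the denominator as an assumption.

```python
from collections import Counter

# Statuses that mean an item has finished processing.
FINISHED = {"Completed", "Failed", "Error"}


def success_rate(statuses: list[str]) -> float:
    """Fraction of finished items whose status is Completed.

    Assumption: Pending and Running items are left out of the denominator.
    """
    counts = Counter(statuses)
    finished = sum(counts[s] for s in FINISHED)
    if finished == 0:
        return 0.0
    return counts["Completed"] / finished


print(success_rate(["Completed", "Completed", "Failed", "Error", "Pending"]))
```

Here two of the four finished items completed, so the rate is 0.5; the Pending item is ignored.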

Filtering Results

Use the Filter dropdown to show:

- All - All test items

- Completed - Successfully completed items

- Failed - Items that failed evaluation

- Error - Items with inference errors

- Pending - Items not yet processed

- Running - Items currently being processed
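The Filter dropdown behaves like a simple status match. This sketch assumes each test item is a dictionary with a hypothetical `status` key holding one of the labels above; All returns every item unchanged.

```python
from typing import Iterable

# Options offered by the Filter dropdown.
FILTERS = {"All", "Completed", "Failed", "Error", "Pending", "Running"}


def filter_items(items: Iterable[dict], choice: str) -> list[dict]:
    """Return the items matching a Filter dropdown choice.

    Assumes each item has a hypothetical "status" key.
    """
    if choice not in FILTERS:
        raise ValueError(f"unknown filter: {choice}")
    if choice == "All":
        return list(items)
    return [item for item in items if item["status"] == choice]
```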

Exporting Results

- Find a completed test run

- Click the Download icon

- A CSV file containing all results, evaluation scores, and reasoning is downloaded
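For deeper analysis, the exported CSV can be loaded with Python's standard `csv` module. This is a sketch only: the column names `status` and `score` are assumptions, so check the header row of your own export and pass the real names in.

```python
import csv
from collections import Counter
from statistics import mean
from typing import Iterable


def summarize_export(lines: Iterable[str], status_col: str = "status", score_col: str = "score") -> dict:
    """Count rows per status and average the numeric scores in an exported CSV.

    The default column names are assumptions, not the documented export format.
    """
    reader = csv.DictReader(lines)
    statuses = Counter()
    scores = []
    for row in reader:
        statuses[row[status_col]] += 1
        raw = (row.get(score_col) or "").strip()
        if raw:
            try:
                scores.append(float(raw))
            except ValueError:
                pass  # skip non-numeric scores such as text labels
    return {
        "by_status": dict(statuses),
        "mean_score": mean(scores) if scores else None,
    }
```

To use it on a downloaded file (the filename is a placeholder): `with open("test_run.csv", newline="", encoding="utf-8") as f: print(summarize_export(f))`.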

Best Practices

- Start with Quick Run for rapid iteration

- Use multiple evaluators for comprehensive assessment

- Keep datasets representative of the inputs you expect in production

- Track success rates across runs to catch regressions

- Review failed items to understand patterns

- Use the temperature and max tokens you plan to use in production

- Export results for deeper analysis
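Tracking success rates across runs can be as simple as comparing each run with the one before it. In this sketch, `history` is a hypothetical list of `(run name, success rate)` pairs in chronological order that you record yourself, and the 5-point tolerance is an arbitrary example value.

```python
def find_regressions(history: list[tuple[str, float]], tolerance: float = 0.05) -> list[str]:
    """Return runs whose success rate dropped more than `tolerance` below the previous run."""
    flagged = []
    # Pair each run with its predecessor.
    for (_, prev_rate), (name, rate) in zip(history, history[1:]):
        if prev_rate - rate > tolerance:
            flagged.append(name)
    return flagged


history = [("v1", 0.80), ("v2", 0.82), ("v3", 0.70), ("v4", 0.71)]
print(find_regressions(history))  # ['v3']
```

Only `v3` is flagged, because it fell 12 points below `v2`; the small rise in `v4` is not a regression.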