Getting Started

Set up PromptForward and run your first prompt test in minutes.

Key Concepts

Before diving in, let's understand the core components of PromptForward:

Prompts

Prompts are the instructions you give to AI models. In PromptForward, prompts include system messages, user messages, and variables. Each time you save changes, a new version is created, allowing you to track and compare improvements over time.

LLM Providers

LLM Providers are your connections to AI services like OpenAI, Anthropic, Groq, or AWS Bedrock. You configure providers with your API credentials so PromptForward can execute prompts using various models.

Datasets

Datasets are collections of test cases. Each row contains input data (like user questions or chat messages) and optionally expected outputs. Datasets let you systematically test your prompts against many scenarios at once.

Evaluators

Evaluators automatically score AI responses. System evaluators use exact matching, contains matching, or regex. Judge LLM evaluators use another AI model to assess quality based on custom criteria like tone, accuracy, or completeness.
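To make the three system evaluator types concrete, here is a minimal Python sketch of what exact, contains, and regex checks typically look like. The function names are hypothetical, and the whitespace trimming and case-insensitive comparison are assumptions for illustration, not PromptForward's documented behavior.

```python
import re


def exact_match(response: str, expected: str) -> bool:
    """Pass only when the response equals the expected text.

    Assumption: surrounding whitespace is ignored.
    """
    return response.strip() == expected.strip()


def contains_match(response: str, expected: str) -> bool:
    """Pass when the expected text appears anywhere in the response.

    Assumption: the comparison is case-insensitive.
    """
    return expected.lower() in response.lower()


def regex_match(response: str, pattern: str) -> bool:
    """Pass when the regular expression matches any part of the response."""
    return re.search(pattern, response) is not None
```

An exact check suits short, fixed answers (labels, IDs), while contains and regex checks tolerate extra wording around the part you care about.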

Test Runs

Test Runs execute your prompt against every item in a dataset and apply evaluators to each response. The results show success rates and individual scores, helping you identify areas for improvement.
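Conceptually, a test run is a loop over dataset rows followed by an aggregate score. The sketch below illustrates that idea only; `run_test`, `fake_model`, and the `input`/`expected` keys are hypothetical names, and the model call is replaced by a stub so the example runs offline.

```python
from typing import Callable, Dict, List, Tuple

Row = Dict[str, str]


def run_test(
    rows: List[Row],
    generate: Callable[[str], str],
    evaluator: Callable[[str, str], bool],
) -> Tuple[List[dict], float]:
    """Run every row through the model, score it, and compute the success rate."""
    results = []
    for row in rows:
        response = generate(row["input"])
        passed = evaluator(response, row["expected"])
        results.append({"input": row["input"], "response": response, "passed": passed})
    # Avoid dividing by zero when the dataset is empty.
    success_rate = sum(r["passed"] for r in results) / len(results) if results else 0.0
    return results, success_rate


def fake_model(question: str) -> str:
    """Stand-in for a real LLM call (assumption: returns canned answers)."""
    answers = {"Capital of France?": "Paris", "2 + 2?": "5"}
    return answers.get(question, "")


rows = [
    {"input": "Capital of France?", "expected": "Paris"},
    {"input": "2 + 2?", "expected": "4"},
]
results, success_rate = run_test(rows, fake_model, lambda resp, exp: resp == exp)
print(f"{success_rate:.0%}")  # prints "50%"
```

The per-row `results` list is what lets you drill into individual failures, while `success_rate` gives the headline number for comparing prompt versions.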

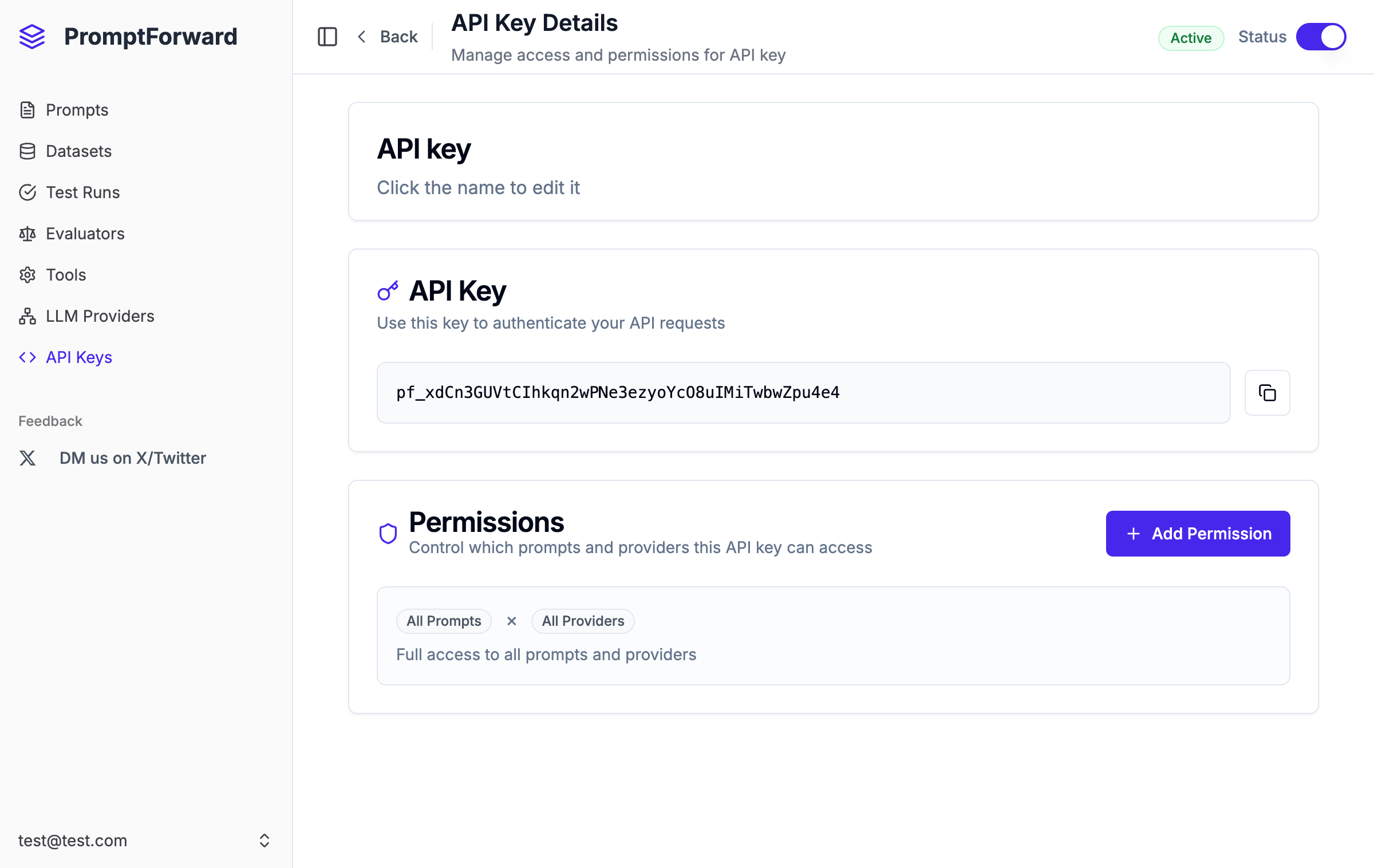

API Keys

API Keys enable your applications to access PromptForward prompts programmatically. You can set granular permissions to control which prompts and providers each key can access.

Quick Start

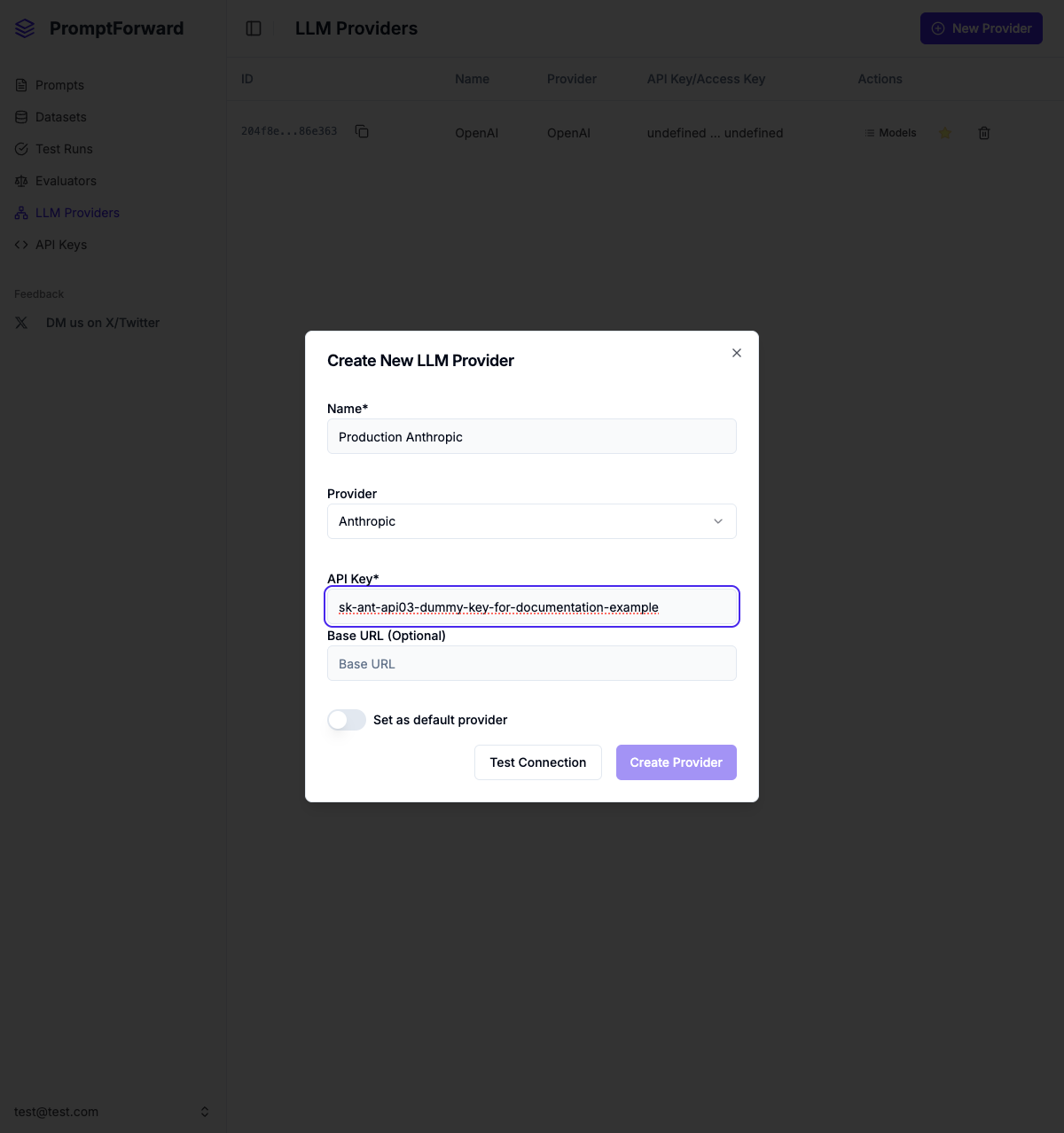

1. Configure an LLM Provider

Before testing prompts, connect to an AI service:

- Navigate to LLM Providers in the sidebar

- Click + New Provider

- Enter a name (e.g., "Production OpenAI")

- Select your provider (OpenAI, Anthropic, Groq, or AWS Bedrock)

- Enter your API credentials

- Click Test Connection to verify

- Click Create Provider

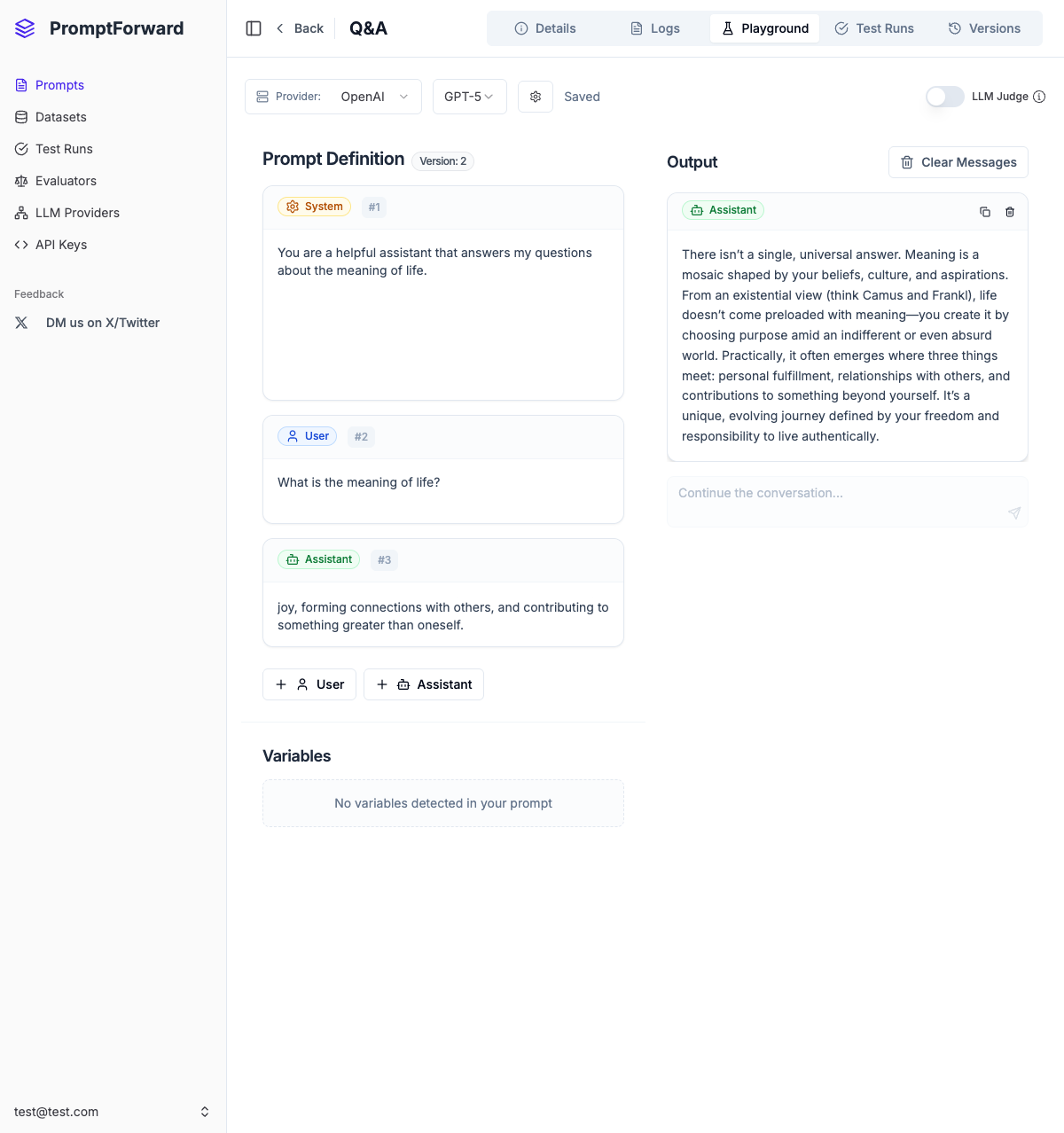

2. Create Your First Prompt

- Navigate to Prompts

- Click + New Prompt

- Enter a name and description

- Click Create Prompt

- Go to the Playground tab

- Select your provider and model

- Edit the system message with your instructions

- Use {{variable_name}} for dynamic inputs

- Click Run to test

- Click Save to create version 1

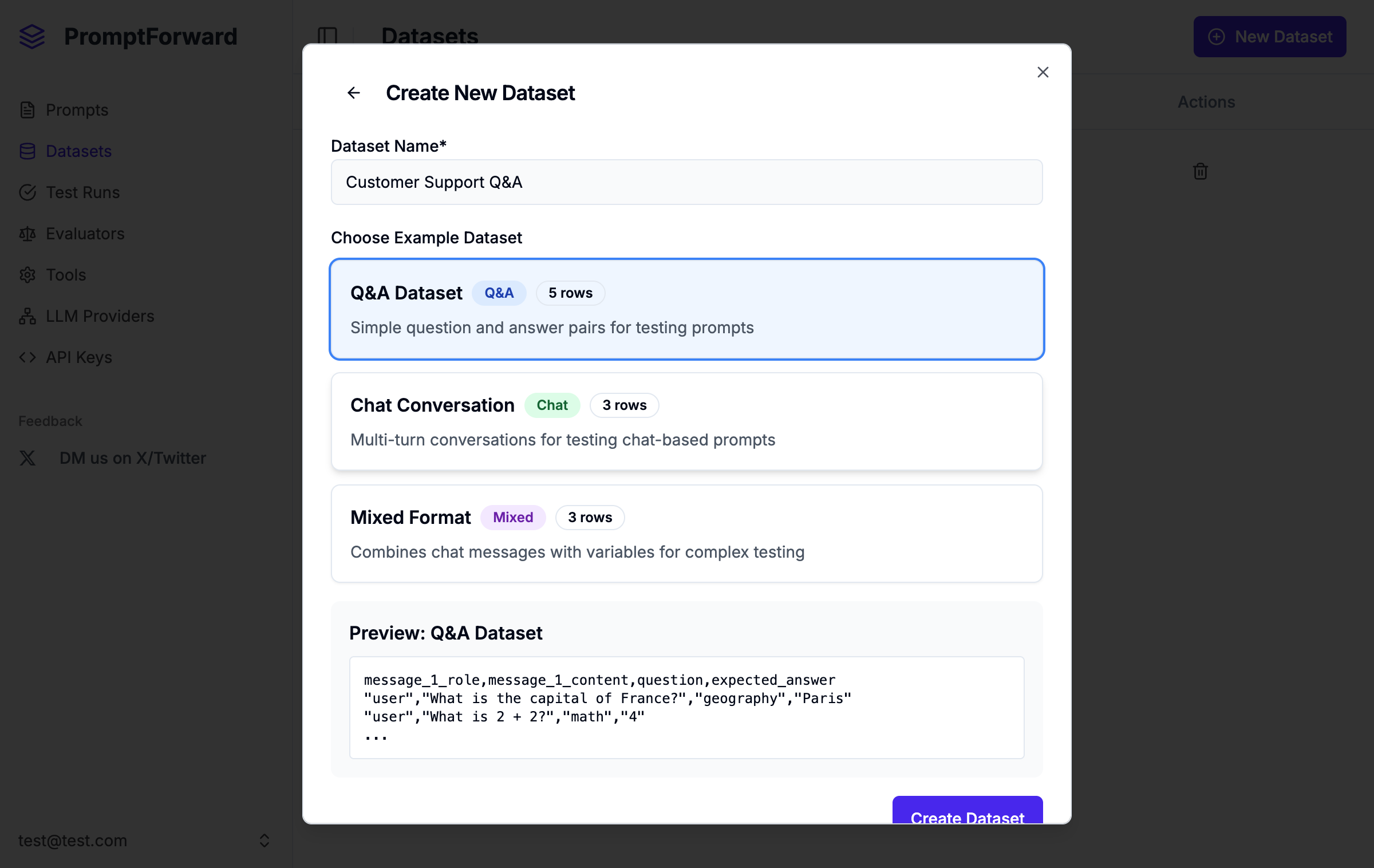
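To illustrate how `{{variable_name}}` placeholders behave, here is a small Python sketch of template substitution. It is an approximation for intuition only: the `render` helper is hypothetical, and details such as allowing spaces inside the braces or raising an error on a missing variable are assumptions rather than PromptForward's actual behavior.

```python
import re

# Matches {{name}} and, as an assumption, also {{ name }} with inner spaces.
PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render(template: str, variables: dict) -> str:
    """Replace each {{name}} placeholder with the matching value."""

    def substitute(match: re.Match) -> str:
        name = match.group(1)
        if name not in variables:
            raise KeyError(f"missing value for variable '{name}'")
        return str(variables[name])

    return PLACEHOLDER.sub(substitute, template)


message = render(
    "Answer in {{language}}: {{question}}",
    {"language": "French", "question": "Where is the station?"},
)
print(message)  # prints "Answer in French: Where is the station?"
```

Keeping variable names identical to your dataset column names makes it easier to see which test data feeds which placeholder.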

3. Create a Dataset

- Navigate to Datasets

- Click + New Dataset

- Choose to use an example, upload a CSV, or start empty

- Enter a dataset name

- Click Create Dataset

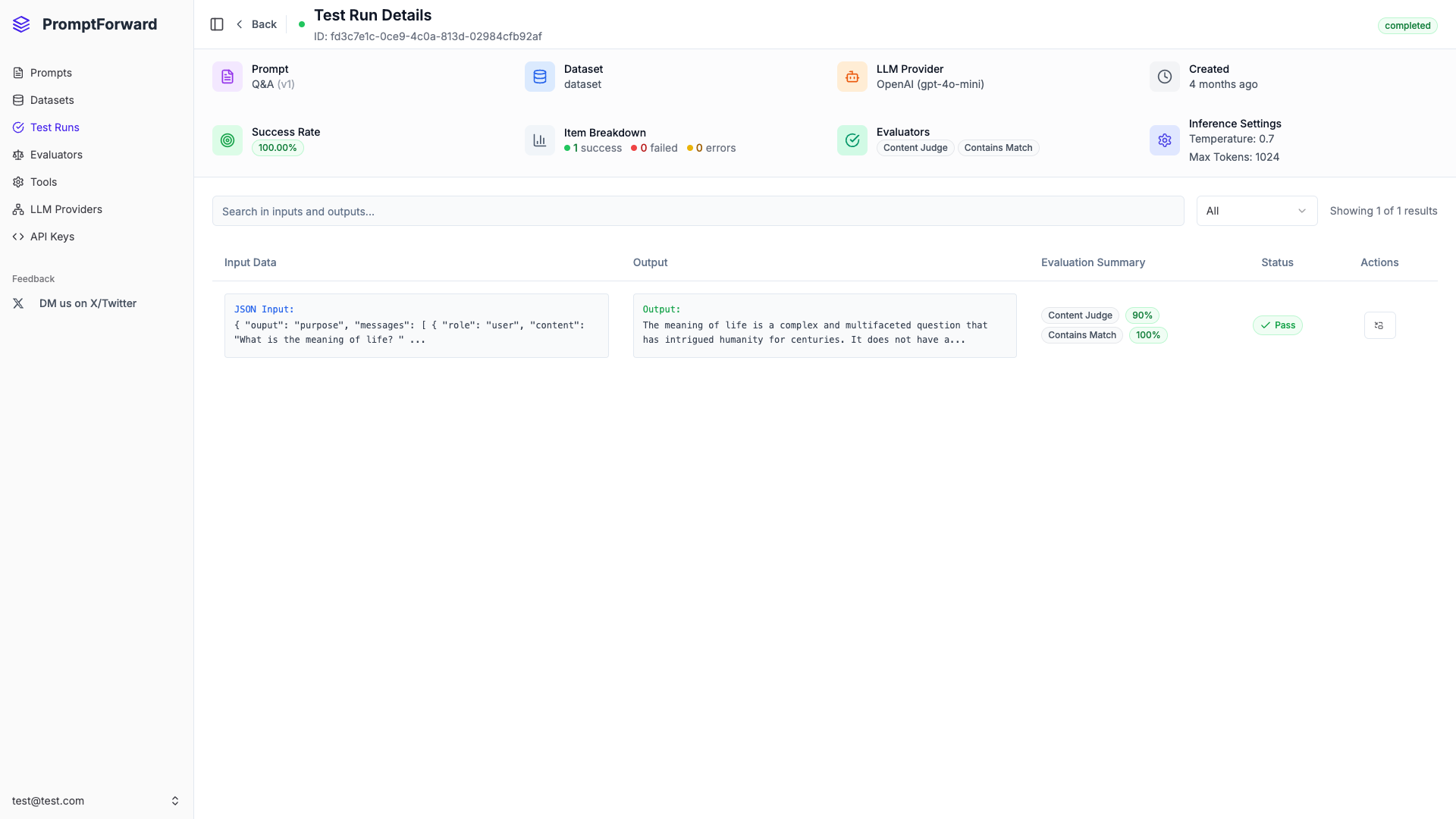
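If you plan to upload a CSV, the following Python sketch shows one way to generate a small file with input data and expected outputs. The column names `question` and `expected_output` are assumptions chosen for illustration; check the dataset upload screen for the headers PromptForward actually expects.

```python
import csv
import io

# Hypothetical test cases: one input column and one expected-output column.
test_cases = [
    {"question": "What is the capital of France?", "expected_output": "Paris"},
    {"question": "Convert 1,000 meters to kilometers", "expected_output": "1 km"},
]

buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=["question", "expected_output"])
writer.writeheader()
writer.writerows(test_cases)  # values containing commas are quoted automatically
csv_text = buffer.getvalue()

# Read the text back to confirm it round-trips cleanly.
parsed = list(csv.DictReader(io.StringIO(csv_text)))
print(len(parsed))  # prints 2
```

To produce a file on disk, write `csv_text` to a path such as `dataset.csv`. Using the `csv` module rather than joining strings by hand avoids broken rows when an input contains commas or quotes.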

4. Run a Test

- Navigate to Test Runs

- Click + New Test

- Select your provider, model, prompt, and dataset

- Add evaluators to assess responses

- Toggle Quick Run for a 20-item test

- Click Run Test

5. Integrate with the API

- Set your prompt version as Live in the Versions tab

- Navigate to API Keys

- Click + New API Key

- Add permissions for your prompt and provider

- Copy the API key and use it in your application
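When you use the key in an application, a common practice is to read it from the environment rather than hard-coding it. The sketch below shows only that step; the environment variable name `PROMPTFORWARD_API_KEY` and the `load_api_key` helper are hypothetical, and the request format for calling PromptForward is not covered here.

```python
import os
from typing import Mapping

# Hypothetical variable name; PromptForward does not define it.
ENV_VAR = "PROMPTFORWARD_API_KEY"


def load_api_key(env: Mapping[str, str] = os.environ) -> str:
    """Return the API key from the environment, failing fast if it is missing."""
    key = env.get(ENV_VAR, "").strip()
    if not key:
        raise RuntimeError(f"Set {ENV_VAR} before starting the application")
    return key
```

Failing at startup when the key is missing tends to be easier to debug than an authentication error on the first request.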

Next Steps

Explore the feature documentation to learn more:

- Prompts - Create and manage prompts with versioning

- Datasets - Build test datasets

- Evaluators - Configure quality assessment

- Test Runs - Run batch tests

- LLM Providers - Connect to AI services

- API Keys - Integrate with your apps