Prompts

Overview

Prompts are the core building blocks of PromptForward. A prompt defines the instructions and context you give an LLM to guide its responses. PromptForward helps you create, test, version, and optimize your prompts through the Playground and its evaluation tools.

Creating a Prompt

- Click + New Prompt in the top right

- Enter a descriptive name

- Optionally add a description

- Click Create Prompt

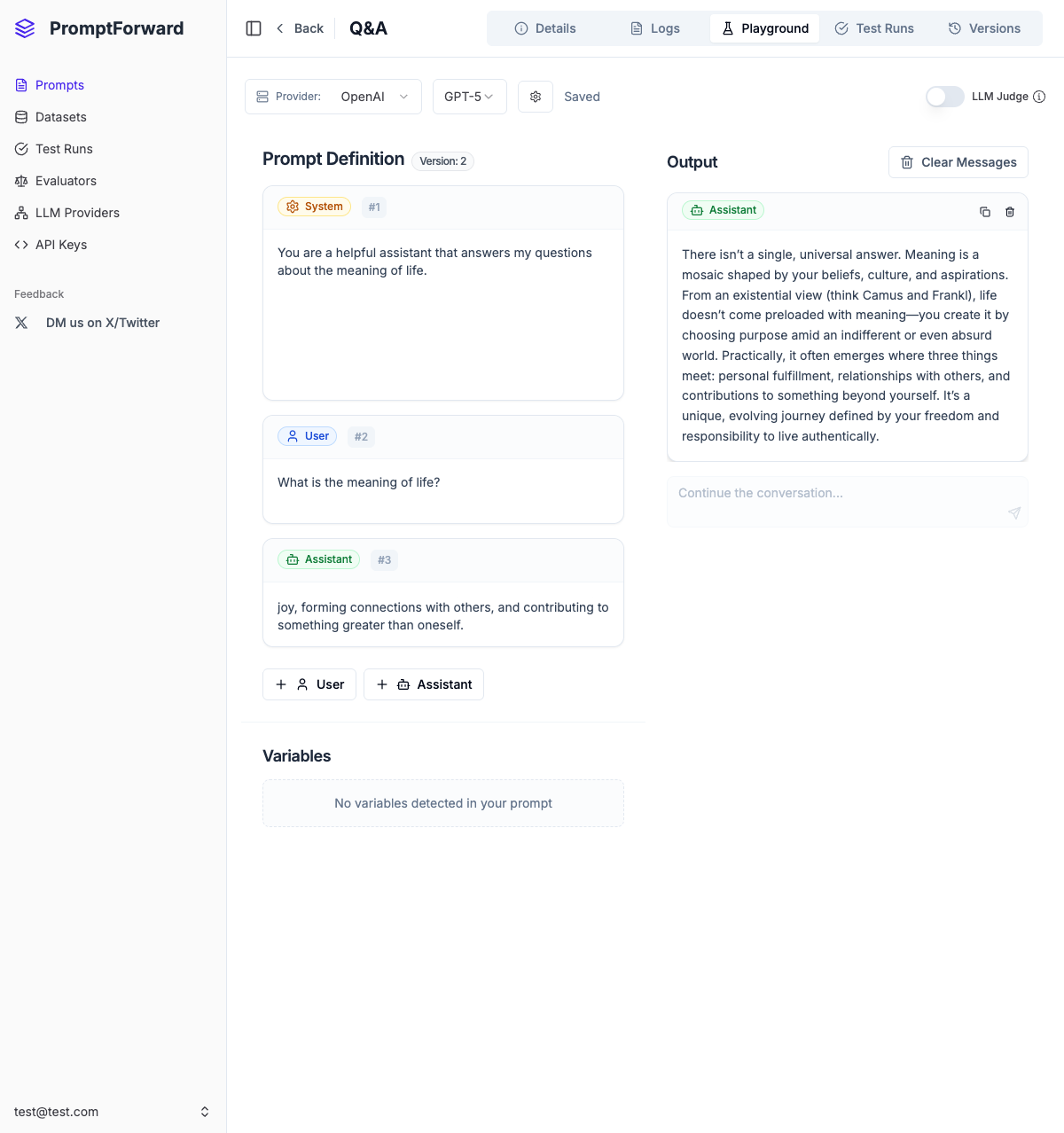

Using the Playground

The Playground provides an interactive environment for crafting and testing your prompt:

Configuration

- Provider & Model - Select your LLM provider and model

- LLM Judge Toggle - Enable when this prompt is an evaluation prompt, i.e. one that acts as an LLM judge

- Model Parameters - Adjust temperature (0.0-2.0) and max tokens
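The settings above can be pictured as a small configuration object. This is a minimal sketch, not PromptForward's API: the `ModelConfig` type, its field names, the placeholder provider and model strings, and the "max tokens must be positive" rule are all assumptions. Only the 0.0-2.0 temperature range comes from the Playground itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelConfig:
    """Hypothetical stand-in for the Playground's Configuration panel."""

    provider: str  # placeholder names; supported providers are not listed here
    model: str
    temperature: float  # the Playground allows 0.0-2.0
    max_tokens: int  # assumed meaning: cap on tokens generated per response
    llm_judge: bool = False  # mirrors the LLM Judge toggle

    def __post_init__(self) -> None:
        # Reject temperatures outside the documented 0.0-2.0 range.
        if not 0.0 <= self.temperature <= 2.0:
            raise ValueError(f"temperature must be between 0.0 and 2.0, got {self.temperature}")
        # Assumption: max tokens must be a positive integer.
        if self.max_tokens < 1:
            raise ValueError(f"max_tokens must be at least 1, got {self.max_tokens}")


config = ModelConfig("example-provider", "example-model", temperature=0.7, max_tokens=512)
```

Validating in `__post_init__` means an out-of-range value fails when the config is built, much as the Playground's controls keep temperature within its allowed range.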

Messages

- System Message - Defines the AI's behavior and role (cannot be deleted)

- User Messages - Represent input from the end user

- Assistant Messages - Example model replies, used to provide few-shot examples
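The three message roles combine into an ordered conversation. The sketch below uses the role/content dictionary convention common to chat-completion APIs. It is an assumption that PromptForward stores messages this way, and `build_messages` is a hypothetical helper. Each few-shot example is a user message paired with the assistant reply the model should imitate.

```python
from typing import TypedDict


class Message(TypedDict):
    role: str  # "system", "user", or "assistant"
    content: str


def build_messages(
    system: str,
    examples: list[tuple[str, str]],
    user_input: str,
) -> list[Message]:
    """Assemble a chat prompt: system message, few-shot pairs, then the real input."""
    messages: list[Message] = [{"role": "system", "content": system}]
    # Each few-shot example is a user turn followed by the assistant reply to imitate.
    for example_input, example_output in examples:
        messages.append({"role": "user", "content": example_input})
        messages.append({"role": "assistant", "content": example_output})
    # The final user message carries the actual request.
    messages.append({"role": "user", "content": user_input})
    return messages


prompt = build_messages(
    "You classify the sentiment of product reviews.",
    [("I love it", "positive"), ("Broke after a day", "negative")],
    "Works fine, nothing special",
)
```

The system message always comes first, which matches its role as the one message that cannot be deleted.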

Variables

Use double curly braces for dynamic content: {{variable_name}}

Variables are automatically detected and displayed in the Variables section.
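Variable detection and substitution can be approximated with a regular expression. This is a minimal sketch, assuming names consist of letters, digits, and underscores with no spaces inside the braces. PromptForward's actual parsing rules are not documented here, and `detect_variables` and `render` are hypothetical helpers.

```python
import re

# Assumption: a variable is {{name}} where name is letters, digits, or underscores.
VARIABLE_PATTERN = re.compile(r"\{\{(\w+)\}\}")


def detect_variables(template: str) -> list[str]:
    """Return each distinct variable name in order of first appearance."""
    seen: dict[str, None] = {}
    for name in VARIABLE_PATTERN.findall(template):
        seen.setdefault(name, None)  # dicts keep insertion order, so this dedupes in order
    return list(seen)


def render(template: str, values: dict[str, str]) -> str:
    """Substitute every {{name}} with values[name]; fail loudly if any is missing."""
    missing = [name for name in detect_variables(template) if name not in values]
    if missing:
        raise KeyError(f"missing values for: {', '.join(missing)}")
    return VARIABLE_PATTERN.sub(lambda m: values[m.group(1)], template)


template = "Summarize {{document}} in {{language}}."
names = detect_variables(template)
text = render(template, {"document": "the release notes", "language": "French"})
```

Raising on a missing value rather than leaving `{{name}}` in place mirrors the Testing step of filling in every variable before you click Run.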

Testing

- Fill in variable values

- Click Run to test

- Review the response

- Continue the conversation using the chat input

- Click Save to create a new version
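Continuing the conversation from the chat input most likely follows the usual chat-API pattern: each new turn is sent together with the full history, so the model sees earlier replies. This is a sketch under that assumption. `run_turn` is hypothetical, and `echo_model` is a stub standing in for the real provider call.

```python
from typing import Callable

Message = dict[str, str]
# Hypothetical model call: takes the full message history, returns the reply text.
ModelFn = Callable[[list[Message]], str]


def run_turn(history: list[Message], user_text: str, call_model: ModelFn) -> str:
    """Append a user turn, send the whole history, and record the assistant reply."""
    history.append({"role": "user", "content": user_text})
    reply = call_model(history)  # the model receives every earlier turn, not just the latest
    history.append({"role": "assistant", "content": reply})
    return reply


def echo_model(messages: list[Message]) -> str:
    """Stub model for illustration: reports how many messages it received."""
    return f"received {len(messages)} messages"


history: list[Message] = [{"role": "system", "content": "You are concise."}]
first = run_turn(history, "First question", echo_model)  # "received 2 messages"
second = run_turn(history, "Follow-up", echo_model)  # "received 4 messages"
```

Because the history grows with every turn, long test conversations also send more tokens on each run.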

Version Management

Each time you save in the Playground, a new version is created. Two versions carry special labels:

- Latest - The most recently saved version

- Live - The version used by API calls (set in the Versions tab)
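The distinction between Latest and Live can be modeled as a list of saved versions plus a separately chosen pointer. This is a minimal sketch, and `PromptVersions` is a hypothetical class rather than PromptForward's API. The behavior before any Live version is chosen is not documented, so raising an error there is an assumption.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PromptVersions:
    """Hypothetical model of one prompt's version history (not PromptForward's API)."""

    versions: list[str] = field(default_factory=list)  # saved prompt texts, oldest first
    live: Optional[int] = None  # 1-based number of the Live version; None until chosen

    def save(self, prompt_text: str) -> int:
        """Each save appends a new version and returns its 1-based number."""
        self.versions.append(prompt_text)
        return len(self.versions)

    @property
    def latest(self) -> int:
        """Latest is always the most recently saved version."""
        if not self.versions:
            raise LookupError("no versions saved yet")
        return len(self.versions)

    def set_live(self, number: int) -> None:
        """Equivalent of choosing the Live version in the Versions tab."""
        if not 1 <= number <= len(self.versions):
            raise ValueError(f"version {number} does not exist")
        self.live = number

    def text_for_api(self) -> str:
        """In this sketch, API calls use Live; saving alone never changes it."""
        if self.live is None:
            # Assumption: the docs do not say what happens before a Live version is set.
            raise LookupError("no Live version has been set")
        return self.versions[self.live - 1]


history = PromptVersions()
history.save("Answer briefly.")
history.save("Answer briefly and cite sources.")
history.set_live(1)
history.save("Answer briefly, cite sources, use bullet points.")
```

Saving version 3 after promoting version 1 leaves `live` at 1 and `latest` at 3. This is why you can keep experimenting in the Playground without affecting production API calls.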

Tabs

- Details - View usage analytics, API evaluation settings, and prompt metadata

- Logs - See all inference executions with inputs, outputs, and evaluation results

- Playground - Interactively test and develop your prompt

- Test Runs - View batch test history

- Versions - Manage version history and set the Live version

Best Practices

- Use descriptive names and descriptions

- Test a prompt in the Playground before using it in production

- Save at significant milestones, since every save creates a new version

- Set up API evaluation early (in the Details tab)

- Use variables for values that change between runs

- Monitor usage analytics in the Details tab regularly