LLM Providers

Overview

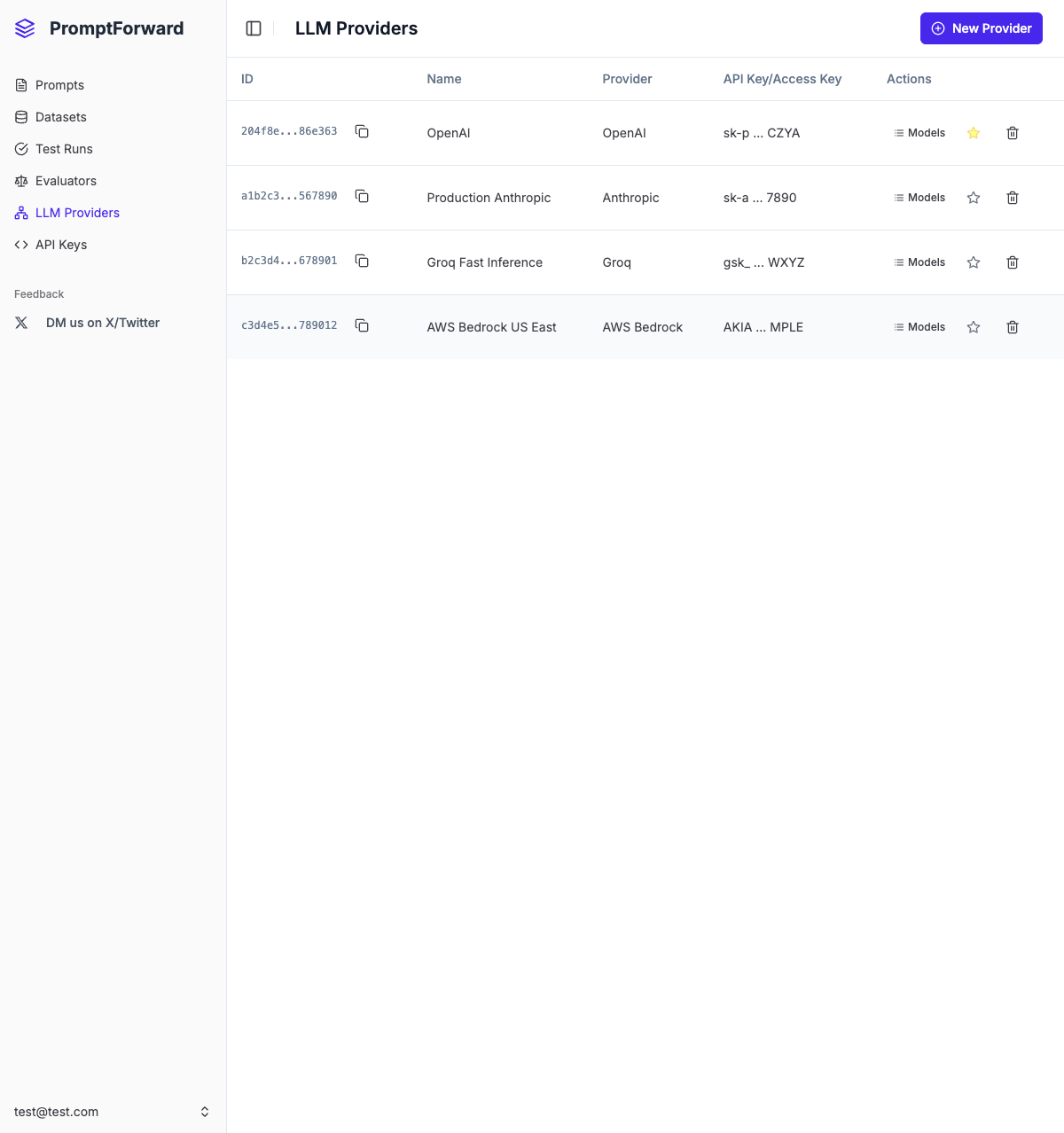

LLM Providers are the model connections that power prompt testing and evaluation. Each provider holds the credentials for one service: OpenAI, Anthropic, AWS Bedrock, or Groq.

Creating a Provider

For OpenAI, Anthropic, or Groq

- Click + New Provider

- Enter a descriptive name

- Select the provider from the dropdown

- Enter your API Key

- Optionally enter a custom Base URL

- Optionally toggle Set as default provider

- Click Test Connection to verify

- Click Create Provider
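To check a key outside the UI, a model-listing request is usually the cheapest call that exercises authentication. The following is a minimal sketch, not a description of what Test Connection does internally. The function names and the `DEFAULT_BASE_URLS` table are hypothetical. The endpoints and headers are the public defaults for each service, and a custom Base URL replaces them.

```python
import json
import urllib.request

# Assumed public default endpoints; a custom Base URL overrides these.
DEFAULT_BASE_URLS = {
    "openai": "https://api.openai.com/v1",
    "anthropic": "https://api.anthropic.com/v1",
    "groq": "https://api.groq.com/openai/v1",
}


def models_request(provider, api_key, base_url=None):
    """Return (url, headers) for a model-listing call to the given provider."""
    base = (base_url or DEFAULT_BASE_URLS[provider]).rstrip("/")
    if provider == "anthropic":
        # Anthropic authenticates with x-api-key plus a version header.
        headers = {"x-api-key": api_key, "anthropic-version": "2023-06-01"}
    else:
        # OpenAI and Groq both accept a bearer token.
        headers = {"Authorization": f"Bearer {api_key}"}
    return f"{base}/models", headers


def check_connection(provider, api_key, base_url=None, timeout=10):
    """Send the request and return the model IDs the key can see.

    Raises urllib.error.HTTPError (for example 401) if the key is rejected.
    """
    url, headers = models_request(provider, api_key, base_url)
    req = urllib.request.Request(url, headers=headers)
    req.add_header("User-Agent", "provider-check/0.1")  # arbitrary label
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        payload = json.load(resp)
    return [model["id"] for model in payload.get("data", [])]
```

Calling `check_connection("groq", api_key)` with a valid key should return a list of model IDs. An invalid key surfaces as an HTTP error, not as an empty list.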

For AWS Bedrock

- Click + New Provider

- Enter a descriptive name

- Select AWS Bedrock from the dropdown

- Enter your AWS Access Key ID

- Enter your AWS Secret Access Key

- Enter your AWS Region (e.g., us-east-1)

- Optionally toggle Set as default provider

- Click Test Connection

- Click Create Provider
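The same three values (Access Key ID, Secret Access Key, Region) can be verified from a script before they are entered in the form. This sketch assumes the third-party `boto3` package is installed. `check_bedrock` and its `client_factory` parameter are hypothetical names introduced here so the call can be exercised without real credentials. It illustrates one way to validate the keys and does not describe how Test Connection is implemented.

```python
def check_bedrock(access_key_id, secret_access_key, region, client_factory=None):
    """Return the Bedrock model IDs visible to these credentials in a region."""
    if client_factory is None:
        import boto3  # third-party dependency: pip install boto3

        client_factory = boto3.client
    client = client_factory(
        "bedrock",  # control-plane service that lists models
        region_name=region,
        aws_access_key_id=access_key_id,
        aws_secret_access_key=secret_access_key,
    )
    # Fails with a botocore ClientError if the keys or permissions are wrong.
    response = client.list_foundation_models()
    return [summary["modelId"] for summary in response["modelSummaries"]]
```

A model list that is empty or shorter than expected may only mean the Region is wrong, since Bedrock model availability varies by Region.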

Supported Providers

AWS Bedrock

Use for: Amazon Bedrock models (Claude, Titan, Llama, etc.)

Setup: Configure an IAM user with Bedrock permissions in the AWS Console
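This guide does not state which Bedrock permissions are required, so the policy below is an assumption: a narrow starting point that allows listing models and invoking them. It is built as a Python dictionary and printed as JSON for pasting into the IAM policy editor. The `Sid` value and the choice of actions are illustrative and should be adjusted to your organization's requirements.

```python
import json

# Hypothetical least-privilege policy; the exact actions this tool needs
# are not documented here, so treat this list as an assumption.
bedrock_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "BedrockListAndInvoke",
            "Effect": "Allow",
            "Action": [
                "bedrock:ListFoundationModels",
                "bedrock:InvokeModel",
                "bedrock:InvokeModelWithResponseStream",
            ],
            # Narrow this to specific model ARNs where possible.
            "Resource": "*",
        }
    ],
}

print(json.dumps(bedrock_policy, indent=2))
```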

Managing Providers

- View Models - Click the Models button to see available models

- Set as Default - Click the star icon to make a provider the default

- Delete - Click the trash icon to remove a provider

Best Practices

- Use descriptive names (e.g., "Production OpenAI GPT-4")

- Secure your credentials - never commit them to version control

- Test connections before saving

- Set a default provider for quick testing

- Rotate keys regularly for security

- Delete unused providers
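One common way to keep credentials out of version control in your own scripts is to read them from environment variables at runtime. The helper below is a minimal sketch. `load_credential` is a hypothetical name, and the variable names in the usage comment are conventions, not something this tool requires.

```python
import os


def load_credential(name):
    """Read a secret from the environment, failing loudly if it is missing."""
    value = os.environ.get(name, "").strip()
    if not value:
        raise RuntimeError(f"Environment variable {name} is not set")
    return value


# Example usage (variable names are conventional, not mandated by this tool):
#   api_key = load_credential("OPENAI_API_KEY")
#   aws_key_id = load_credential("AWS_ACCESS_KEY_ID")
```

Failing immediately on a missing variable makes a misconfigured environment obvious, where an empty key would otherwise produce a confusing authentication error later.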